Those claiming AI training on copyrighted works is “theft” misunderstand key aspects of copyright law and AI technology. Copyright protects specific expressions of ideas, not the ideas themselves. When AI systems ingest copyrighted works, they’re extracting general patterns and concepts - the “Bob Dylan-ness” or “Hemingway-ness” - not copying specific text or images.

This process is akin to how humans learn by reading widely and absorbing styles and techniques, rather than memorizing and reproducing exact passages. The AI discards the original text, keeping only abstract representations in “vector space”. When generating new content, the AI isn’t recreating copyrighted works, but producing new expressions inspired by the concepts it’s learned.

This is fundamentally different from copying a book or song. It’s more like the long-standing artistic tradition of being influenced by others’ work. The law has always recognized that ideas themselves can’t be owned - only particular expressions of them.

Moreover, there’s precedent for this kind of use being considered “transformative” and thus fair use. The Google Books project, which scanned millions of books to create a searchable index, was ruled legal despite protests from authors and publishers. AI training is arguably even more transformative.

While it’s understandable that creators feel uneasy about this new technology, labeling it “theft” is both legally and technically inaccurate. We may need new ways to support and compensate creators in the AI age, but that doesn’t make the current use of copyrighted works for AI training illegal or unethical.

For those interested, this argument is nicely laid out by Damien Riehl in FLOSS Weekly episode 744. https://twit.tv/shows/floss-weekly/episodes/744

Studied AI at uni. I’m also a cyber security professional. AI can be hacked or tricked into exposing training data. Therefore your claim about it disposing of the training material is totally wrong.

Ask your search engine of choice what happened when Gippity was asked to print the word “book” indefinitely. Answer: it printed training material after printing the word book a couple hundred times.

Also my main tutor in uni was a neuroscientist. Dude straight up told us that the current AI was only capable of accurately modelling something as complex as a dragonfly. For larger organisms it is nowhere near an accurate recreation of a brain. There are complexities in our brain chemistry that simply aren’t accounted for in a statistical inference model and definitely not in the current GPT models.

That knowledge is out of date and out of touch. While it’s possible to expose small bits of training data, that’s akin to someone being able to recall part of a scene they once saw. Those exercises also took what sometimes amounts to weeks or months of interrogation techniques, refined over time by people targeting specific types of responses. Think of it like a skilled police interrogator tricking a toddler out of one of their toys by threatening them or offering them something until it worked. Nowadays, that’s getting far more difficult to do and they’re spending a lot more time and expertise to do it.

Also, consider how complex a dragonfly is and how young this technology is. Very little in tech has ever progressed that fast. Give it five more years and come back to laugh at how naive your comment will seem.

Dammit, so my comment to the other person was a mix of a reply to this one and the last one… not having a good day for language processing, ironically.

Specifically on the dragonfly thing, I don’t think I’ll believe myself naive for writing that post or this one. Dragonflies aren’t very complex and only really have a few behaviours and inputs. We can accurately predict how they will fly. I brought up the dragonfly to mention the limitations of the current tech and concepts. Given the world’s computing power and research investment, the best we can do is a dragonfly for intelligence.

To be fair, scientists don’t entirely understand neurons, and ML-designed neuron data structures behave similarly to very early ideas of what brains do, but it’s based on concepts from the 1950s. There are different segments of the brain which process different things and we sort of think we know what they all do, but most of the studies AI is based on are honestly outdated neuroscience. OpenAI seem to think if they stuff enough data into this language processor it will become sentient, and want an exemption from copyright law so they can be profitable rather than actually improving the tech concepts and designs.

Newer neuroscience research suggests neurons perform differently based on the brain chemicals present, they don’t all always fire at every (or even most) input and they usually present a train of thought, i.e. thoughts literally move around in the brain’s areas. This is all very different to current ML implementations and is frankly a good enough reason to suggest the tech has a lot of room to develop. I like the field of research and it’s interesting to watch it develop but they can honestly fuck off telling people they need free access to the world’s content.

TL;DR dragonflies aren’t that complex and the tech has way more room to grow. However, they have to generate revenue to keep going so they’re selling a large inference machine that relies on all of humanity’s content to generate the wrong answer to 2+2.

Your first point is misguided and incorrect. If you’ve ever learned something by ‘cramming’, a.k.a. just repeatedly ingesting material until you remember it completely, you don’t need the book in front of you anymore to write the material down verbatim in a test. You still discarded your training material despite knowing the exact contents. If this was all the AI could do it would indeed be an infringement machine. But you said it yourself, you need to trick the AI to do this. It’s not made to do this, but certain sentences are indeed almost certain to show up with the right conditioning. That is indeed something anyone using an AI should be aware of, and they should avoid that kind of conditioning. (Which in practice often just means, don’t ask the AI to make something infringing)

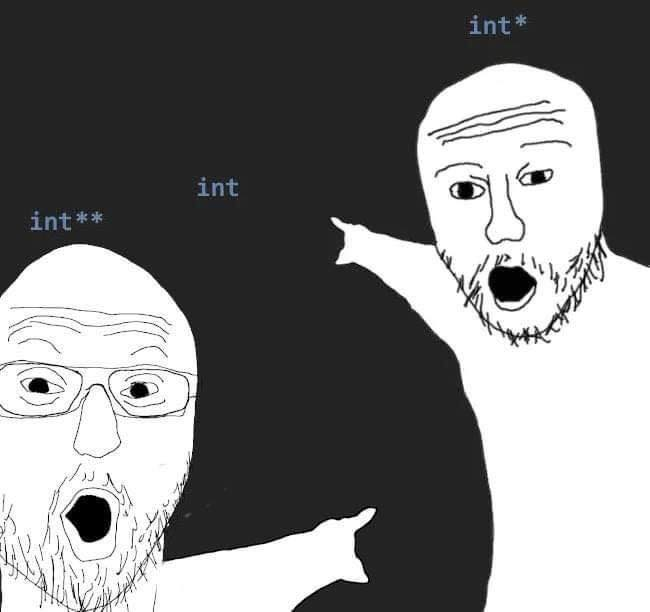

I think you’re anthropomorphising the tech tbh. It’s not a person or an animal, it’s a machine and cramming doesn’t work in the idea of neural networks. They’re a mathematical calculation over a vast multidimensional matrix, effectively solving a polynomial of an unimaginable order. So “cramming” as you put it doesn’t work because by definition an LLM cannot forget information because once it’s applied the calculations, it is in there forever. That information is supposed to be blended together. Overfitting is the closest thing to what you’re describing, which would be inputting similar information (training data) and performing the similar calculations throughout the network, and it would therefore exhibit poor performance should it be asked to do anything different to the training.

What I’m arguing over here is language rather than a system so let’s do that and note the flaws. If we’re being intellectually honest we can agree that a flaw like reproducing large portions of a work doesn’t represent true learning and shows a reliance on the training data, i.e. it can’t learn unless it has seen similar data before and certain inputs provide a chance it just parrots back the training data.

In the example (repeat book over and over), it has statistically inferred that those are all the correct words to repeat in that order based on the prompt. This isn’t akin to anything human, people can’t repeat pages of text verbatim like this and no toddler can be tricked into repeating a random page from a random book as you say. The data is there, it’s encoded and referenced when the probability is high enough. As another commenter said, language itself is a powerful tool of rules and stipulations that provide guidelines for the machine, but it isn’t crafting its own sentences, it’s using everyone else’s.
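A toy sketch of what “statistically inferred” can look like in practice, assuming a tiny word-level model rather than anything resembling a real LLM (the function names and the training sentence are made up for illustration). The model keeps only counts of which word follows which pair of words, yet greedy generation from the first two words walks straight back through the training sentence:

```python
from collections import defaultdict


def train_trigram_model(text):
    """Count which word follows each pair of consecutive words."""
    words = text.split()
    counts = defaultdict(lambda: defaultdict(int))
    for first, second, following in zip(words, words[1:], words[2:]):
        counts[(first, second)][following] += 1
    return counts


def generate(model, prompt, max_words=20):
    """Greedily append the most frequent follower of the last two words."""
    out = prompt.split()
    for _ in range(max_words):
        followers = model.get(tuple(out[-2:]))
        if not followers:
            break  # the model has never seen this context
        out.append(max(followers, key=followers.get))
    return " ".join(out)


# Hypothetical "training data": one sentence, seen once.
training_text = (
    "the quick brown fox jumps over the lazy dog near the quiet river bank"
)
model = train_trigram_model(training_text)

# The original string is not stored anywhere in `model`, only the counts,
# but the statistics alone are enough to rebuild it word for word.
output = generate(model, "the quick")
print(output == training_text)
```

Real models are vastly larger and blend many sources, so this only illustrates the mechanism being argued about, not how often it happens.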

Also, calling it “tricking the AI” isn’t really intellectually honest either, as in “it was tricked into exposing it still has the data encoded”. We can state it isn’t preferred or intended behaviour (an exploit of the system) but the system, under certain conditions, exhibits reuse of the training data and the ability to replicate it almost exactly (plagiarism). Therefore it is factually wrong to state that it doesn’t keep the training data in a usable format - which was my original point. This isn’t “cramming”, this is encoding and reusing data that was not created by the machine or the programmer, this is other people’s work that it is reproducing as its own. It does this constantly, from reusing StackOverflow code and comments to copying tutorials on how to do things. I was showing a case where it won’t even modify the wording, but it reproduces articles and programs in their structure and their format. This isn’t originality, creativity or anything that it is marketed as. It is storing, encoding and copying information to reproduce in a slightly different format.

EDITS: Sorry for all the edits. I mildly changed what I said and added some extra points so it was a little more intelligible and didn’t make the reader go “WTF is this guy on about”. Not doing well in the written department today so this was largely gobbledegook before but hopefully it is a little clearer what I am saying.

They are laundering the creative works of humans. That’s it. The end. They are laundering machines for art. They should be treated and legislated as such.

You drank the kool-aid.

Are the models that OpenAI creates open source? I don’t know enough about LLMs but if ChatGPT wants exemptions from the law, it should result in a public good (emphasis on public).

The STT (speech to text) model that they created is open source (Whisper) as well as a few others:

Those aren’t open source, neither by the OSI’s Open Source Definition nor by the OSI’s Open Source AI Definition.

The important part for the latter being a published listing of all the training data. (Trainers don’t have to provide the data, but they must provide at least a way to recreate the model given the same inputs).

Data information: Sufficiently detailed information about the data used to train the system, so that a skilled person can recreate a substantially equivalent system using the same or similar data. Data information shall be made available with licenses that comply with the Open Source Definition.

They are model-available if anything.

I did a quick check on the license for Whisper:

Whisper’s code and model weights are released under the MIT License. See LICENSE for further details.

So that definitely meets the Open Source Definition on your first link.

And it looks like it also meets the definition of open source as per your second link.

Additional WER/CER metrics corresponding to the other models and datasets can be found in Appendix D.1, D.2, and D.4 of the paper, as well as the BLEU (Bilingual Evaluation Understudy) scores for translation in Appendix D.3.

Whisper’s code and model weights are released under the MIT License. See LICENSE for further details. So that definitely meets the Open Source Definition on your first link.

Model weights by themselves do not qualify as “open source”, as the OSAID qualifies. Weights are not source.

Additional WER/CER metrics corresponding to the other models and datasets can be found in Appendix D.1, D.2, and D.4 of the paper, as well as the BLEU (Bilingual Evaluation Understudy) scores for translation in Appendix D.3.

This is not training data. These are testing metrics.

Edit: additionally, assuming you might have been talking about the link to the research paper. It’s not published under an OSD license. If it were this would qualify the model.

I don’t understand. What’s missing from the code, model, and weights provided to make this “open source” by the definition of your first link? It seems to meet all of those requirements.

As for the OSAID, the exact training dataset is not required, per your quote, they just need to provide enough information that someone else could train the model using a “similar dataset”.

The problem with just shipping AI model weights is that they run up against the issue of point 2 of the OSD:

The program must include source code, and must allow distribution in source code as well as compiled form. Where some form of a product is not distributed with source code, there must be a well-publicized means of obtaining the source code for no more than a reasonable reproduction cost, preferably downloading via the Internet without charge. The source code must be the preferred form in which a programmer would modify the program. Deliberately obfuscated source code is not allowed. Intermediate forms such as the output of a preprocessor or translator are not allowed.

AI models can’t be distributed purely as source because they are pre-trained. It’s the same as distributing pre-compiled binaries.

It’s the entire reason the OSAID exists:

- The OSD doesn’t fit because it requires you distribute the source code in a non-preprocessed manner.

- AIs can’t necessarily distribute the training data alongside the code that trains the model, so in order to help bridge the gap the OSI made the OSAID - as long as you fully document the way you trained the model so that somebody that has access to the training data you used can make a mostly similar set of weights, you fall within the OSAID

Edit: also the information about the training data has to be published under an OSD-equivalent license (such as Creative Commons) so that using it doesn’t cause licensing issues with research paper print companies (like arXiv).

Oh and for the OSAID part, the only issue stopping Whisper from being considered open source as per the OSAID is that the information on the training data is published through arxiv, so using the data as written could present licensing issues.

Ok, but the most important part of that research paper is published on the github repository, which explains how to provide audio data and text data to recreate any STT model in the same way that they have done.

See the “Approach” section of the github repository: https://github.com/openai/whisper?tab=readme-ov-file#approach

And the Training Data section of their GitHub: https://github.com/openai/whisper/blob/main/model-card.md#training-data

With this you don’t really need to use the paper hosted on arxiv, you have enough information on how to train/modify the model.

There are guides on how to fine-tune the model yourself: https://huggingface.co/blog/fine-tune-whisper

Which, from what I understand on the link to the OSAID, is exactly what they are asking for. The ability to retrain/finetune a model fits this definition very well:

The preferred form of making modifications to a machine-learning system is:

- Data information […]

- Code […]

- Weights […]

All 3 of those have been provided.

Nothing about OpenAI is open-source. The name is a misdirection.

If you use my IP without my permission and profit from it, then that is IP theft, whether or not you republish a plagiarized version.

So I guess every reaction and review on the internet that is ad supported or behind a paywall is theft too?

No, we have rules on fair use and derivative works. Sometimes they fall on one side, sometimes another.

Fair use by humans.

There is no fair use by computers, otherwise we couldn’t have piracy laws.

OpenAI does not publish their models openly. Other companies like Microsoft and Meta do.

“but how are we supposed to keep making billions of dollars without unscrupulous intellectual property theft?! line must keep going up!!”

So, is the Internet caring about copyright now? Decades of Napster, Limewire, BitTorrent, Piratebay, etc, but we care now because it’s a big corporation doing it?

It’s not hypocritical to care about some parts of copyright and not others. For example, most people in the FOSS crowd don’t really care about using copyright to monetarily leverage being the sole distributor of a work, but they do care about attribution.

The Internet is not a person

People on Lemmy. I personally didn’t realize everyone here was such big fans of copyright and artificial scarcity.

The reality is that people hate tech bros (deservedly) and then blindly hate on everything they like by association, which sometimes results in dumbassery like everyone now dick-riding the copyright system.

The reality is that people hate the corporations using creative peoples works to try and make their jobs basically obsolete and they grab onto anything to fight against it, even if it’s a bit of a stretch.

I’d hate a world lacking real human creativity.

Me too, but real human creativity comes from having the time and space to rest and think properly. Automation is the only reason we have as much leisure time as we do on a societal scale now, and AI just allows us to automate more menial tasks.

Do you know where AI is actually being used the most right now? Automating away customer service jobs, automatic form filling, translation, and other really boring but necessary tasks that computers used to be really bad at before neural networks.

And some automation I have no problems with. However, if corporations would rather use AI than hire creatives, the creatives will have to look for other work and likely won’t have a space to express their creativity, not at work nor during leisure time (no time, exhaustion, etc.). Something should be done so it doesn’t go there. Preemptively. Not after everything’s gone to shit. I don’t see the people defending AI from the copyright stuff even acknowledging the issue. Holding up the copyright card, currently, is the easiest way to try and avoid this happening.

Personally for me it’s about the double standard. When we perform small scale “theft” to experience things we’d be willing to pay for if we could afford it and the money funded the artists, they throw the book at us. When they build a giant machine that takes all of our work and turns it into an automated record scratcher that they will profit off of and replace our creative jobs with, that’s just good business. I don’t think it’s okay that they get to do things like implement DRM because IP theft is so terrible, but then when they do it systemically and against the specific licensing of the content that has been posted to the internet, that’s protected in the eyes of the law.

I mean, OpenAI’s not getting off scot-free, they’ve been getting sued a lot recently over this exact copyright argument. The New York Times is suing them for potentially billions.

They throw the book at us

Do they, though? Since the Metallica lawsuits in the aughts there hasn’t been much prosecution at the consumer level for piracy, and what little there is is mostly cease-and-desist letters.

Kill a person, that’s a tragedy. Kill a hundred thousand people, they make you king.

Steal $10, you go to jail. Steal $10 billion, they make you Senator.

If you do crime big enough, it becomes good.

If you do crime big enough, it becomes good.

No, no it doesn’t.

It might become legal, or tolerated, or the laws might become unenforceable.

But that doesn’t make it good, on the contrary, it makes it even worse.

That’s their point.

No shit

What about companies who scrape public sites for training data but then publish their trained models open source for anyone to use?

That feels a lot more reasonable and fair to me personally.

If they still profit from it, no.

Open models made by nonprofit organisations, listing their sources, not including anything from anyone who requests it not to be included (with robots.txt, for instance), and burdened with a GPL-like viral license that prevents the models and their results from being used for profit… that’d probably be fine.
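For the robots.txt part, a minimal sketch of what honouring an opt-out could look like, using Python’s standard `urllib.robotparser`. The bot name `ExampleTrainingBot` and the example.org URL are hypothetical, and a real crawler would fetch the site’s live robots.txt instead of a hard-coded string:

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: the site opts out of one training crawler
# but leaves everything open to other user agents.
robots_txt = """\
User-agent: ExampleTrainingBot
Disallow: /

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())


def may_collect(user_agent, url):
    """Return True only if robots.txt permits this agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


page = "https://example.org/essays/1"
trainer_allowed = may_collect("ExampleTrainingBot", page)
other_allowed = may_collect("SomeOtherBot", page)

print(trainer_allowed, other_allowed)
```

Note that robots.txt is only a request: nothing technical stops a crawler from ignoring it, which is why the licensing side of the proposal matters too.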

And also be useless for most practical applications.

We’re talking about LLMs. They’re useless for most practical applications by definition.

And when they’re not entirely useless (basically, autocomplete) they’re orders of magnitude less cost-effective than older almost equivalent alternatives, so they’re effectively useless at that, too.

They’re fancy extremely costly toys without any practical use, that thanks to the short-sighted greed of the scammers selling them will soon become even more useless due to model collapse.

You tell me, was it people suing companies or companies suing people?

Is a company claiming it should be able to have free access to content or a person?

Just a point of clarification: Copyright is about the right of distribution. So yes, a company can just “download the Internet”, store it, and do whatever TF they want with it as long as they don’t distribute it.

That’s the key: Distribution. That’s why no one gets sued for downloading. They only ever get sued for uploading. Furthermore, the damages (if found guilty) are based on the number of copies that get distributed. It’s because copyright law hasn’t been updated in decades and 99% of it predates computers (especially all the important case law).

What these lawsuits against OpenAI are claiming is that OpenAI is making a derivative work of the authors/owners works. Which is kinda what’s going on but also not really. Let’s say that someone asks ChatGPT to write a few paragraphs of something in the style of Stephen King… His “style” isn’t even copyrightable so as long as it didn’t copy his works word-for-word is it even a derivative? No one knows. It’s never been litigated before.

My guess: No. It’s not going to count as a derivative work. Because it’s no different than a human reading all his books and performing the same, perfectly legal function.

It’s more about copying, really.

That’s why no one gets sued for downloading.

People do get sued in some countries. EG Germany. I think they stopped in the US because of the bad publicity.

What these lawsuits against OpenAI are claiming is that OpenAI is making a derivative work of the authors/owners works.

That theory is just crazy. I think it’s already been thrown out of all these suits.

There is a kernel of validity to your point, but let’s not pretend like those things are at all the same. The difference between copyright violation for personal use and copyright violation for commercialization is many orders of magnitude.

People don’t like when you punch down. When a 13-year-old illegally downloaded a Limp Bizkit album no one cared. When corporations worth billions funded by venture capital systematically harvest the work of small creators (often with appropriate license) to sell a product people tend to care.

Bullshit. AI are not human. We shouldn’t treat them as such. AI are not creative. They just regurgitate what they are trained on. We call what it does “learning”, but that doesn’t mean we should elevate what they do to be legally equal to human learning.

It’s this same kind of twisted logic that makes people think Corporations are People.

Ok, ignore this specific company and technology.

In the abstract, if you wanted to make artificial intelligence, how would you do it without using the training data that we humans use to train our own intelligence?

We learn by reading copyrighted material. Do we pay for it? Sometimes. Sometimes a teacher read it a while ago and then just regurgitated basically the same copyrighted information back to us in a slightly changed form.

We learn by reading copyrighted material.

We are human beings. The comparison is false on its face because what you all are calling AI isn’t in any conceivable way comparable to the complexity and versatility of a human mind, yet you continue to spit this lie out, over and over again, trying to play it up like it’s Data from Star Trek.

This model isn’t “learning” anything in any way that is even remotely like how humans learn. You are deliberately simplifying the complexity of the human brain to make that comparison.

Moreover, human beings make their own choices, they aren’t actual tools.

They pointed a tool at copyrighted works and told it to copy, do some math, and regurgitate it. What the AI “does” is not relevant, what the people that programmed it told it to do with that copyrighted information is what matters.

There is no intelligence here except theirs. There is no intent here except theirs.

This model isn’t “learning” anything in any way that is even remotely like how humans learn. You are deliberately simplifying the complexity of the human brain to make that comparison.

I do think the complexity of artificial neural networks is overstated. A real neuron is a lot more complex than an artificial one, and real neurons are not simply feed forward like ANNs (which have to be because they are trained using back-propagation), but instead have their own spontaneous activity (which kinda implies that real neural networks don’t learn using stochastic gradient descent with back-propagation). But to say that there’s nothing at all comparable between the way humans learn and the way ANNs learn is wrong IMO.
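For anyone unfamiliar with the terms, here is a minimal sketch of a single artificial neuron trained with stochastic gradient descent, assuming a logistic activation and a toy OR dataset (both chosen purely for illustration). With one neuron, back-propagation collapses to a single gradient step per sample, which shows how much simpler the artificial unit is than a real neuron:

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, x1, x2):
    """Feed-forward pass: weighted sum of the inputs, then a squashing function."""
    return sigmoid(weights[0] * x1 + weights[1] * x2 + bias)


def train_neuron(samples, epochs=2000, lr=0.5, seed=0):
    """Train one logistic neuron with per-sample (stochastic) gradient descent."""
    rng = random.Random(seed)
    weights = [rng.uniform(-0.5, 0.5) for _ in range(2)]
    bias = 0.0
    data = list(samples)  # copy so the caller's list is not reordered
    for _ in range(epochs):
        rng.shuffle(data)
        for (x1, x2), target in data:
            output = predict(weights, bias, x1, x2)
            # Gradient of the cross-entropy loss w.r.t. the pre-activation sum.
            error = output - target
            weights[0] -= lr * error * x1
            weights[1] -= lr * error * x2
            bias -= lr * error
    return weights, bias


# Toy dataset (an assumption for this sketch): the logical OR function.
OR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

weights, bias = train_neuron(OR_DATA)
predictions = [round(predict(weights, bias, x1, x2)) for (x1, x2), _ in OR_DATA]
print(predictions)
```

There is no spontaneous activity, no chemistry and no timing here: the unit only responds when an input is pushed through it, which is the contrast with real neurons drawn above.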

If you read books such as V.S. Ramachandran and Sandra Blakeslee’s Phantoms in the Brain or Oliver Sacks’ The Man Who Mistook His Wife For a Hat you will see lots of descriptions of patients with anosognosia brought on by brain injury. These are people who, for example, are unable to see but also incapable of recognizing this inability. If you ask them to describe what they see in front of them they will make something up on the spot (in a process called confabulation) and not realize they’ve done it. They’ll tell you what they’ve made up while believing that they’re telling the truth. (Vision is just one example, anosognosia can manifest in many different cognitive domains).

It is V.S. Ramachandran’s belief that there are two processes that occur in the brain, a confabulator (or “yes man” so to speak) and an anomaly detector (or “critic”). The yes-man’s job is to offer up explanations for sensory input that fit within the existing mental model of the world, whereas the critic’s job is to advocate for changing the world-model to fit the sensory input. In patients with anosognosia something has gone wrong in the connection between the critic and the yes-man in a particular cognitive domain, and as a result the yes-man is the only one doing any work. Even in a healthy brain you can see the effects of the interplay between these two processes, such as with the placebo effect and in hallucinations brought on by sensory deprivation.

I think ANNs in general and LLMs in particular are similar to the yes-man process, but lack a critic to go along with it.

What implications does that have on copyright law? I don’t know. Real neurons in a petri dish have already been trained to play games like DOOM and control the yoke of a simulated airplane. If they were trained instead to somehow draw pictures what would the legal implications of that be?

There’s a belief that laws and political systems are derived from some sort of deep philosophical insight, but I think most of the time they’re really just whatever works in practice. So, what I’m trying to say is that we can just agree that what OpenAI does is bad and should be illegal without having to come up with a moral imperative that forces us to ban it.

We are human beings. The comparison is false on its face because what you all are calling AI isn’t in any conceivable way comparable to the complexity and versatility of a human mind, yet you continue to spit this lie out, over and over again, trying to play it up like it’s Data from Star Trek.

If you fundamentally do not think that artificial intelligences can be created, the onus is on you to explain why it’s impossible to replicate the circuitry of our brains. Everything in science we’ve seen thus far has shown that we are merely physical beings that can be recreated physically.

Otherwise, I asked you to examine a thought experiment where you are trying to build an artificial intelligence, not necessarily an LLM.

This model isn’t “learning” anything in any way that is even remotely like how humans learn. You are deliberately simplifying the complexity of the human brain to make that comparison.

Or you are overcomplicating yourself to seem more important and special. Definitely no way that most people would be biased towards that, is there?

Moreover, human beings make their own choices, they aren’t actual tools.

Oh please do go ahead and show us your proof that free will exists! Thank god you finally solved that one! I heard people were really stressing about it for a while!

They pointed a tool at copyrighted works and told it to copy, do some math, and regurgitate it. What the AI “does” is not relevant, what the people that programmed it told it to do with that copyrighted information is what matters.

“I don’t know how this works but it’s math and that scares me!”

There is no intelligence here

I guess a broken clock is right twice a day…

If we have an AI that’s equivalent to humanity in capability of learning and creative output/transformation, it would be immoral to just use it as a tool. At least that’s how I see it.

I think that’s a huge risk, but we’ve only ever seen a single, very specific type of intelligence, our own / that of animals that are pretty closely related to us.

Movies like Ex Machina and Her do a good job of pointing out that there is nothing that inherently means that an AI will be anything like us, even if they can appear that way or pass at tasks.

It’s entirely possible that we could develop an AI that was so specifically trained that it would provide the best script editing notes but be incapable of anything else for instance, including self reflection or feeling loss.

The thing is, they can have scads of free stuff that is not copyrighted. But they are greedy and want copyrighted stuff, too.

We all should. Copyright is fucking horseshit.

It costs literally nothing to make a digital copy of something. There is ZERO reason to restrict access to things.

You sound like someone who has not tried to make an artistic creation for profit.

You sound like someone unwilling to think about a better system.

Better system for WHOM? Tech-bros that want to steal my content as their own?

I’m a writer, performing artist, designer, and illustrator. I have thought about copyright quite a bit. I have released some of my stuff into the public domain, as well as the Creative Commons. If you want to use my work, you may - according to the licenses that I provide.

I also think copyright law is way out of whack. It should go back to - at most - life of author. This “life of author plus 70 years” is ridiculous. I lament that so much great work is being lost or forgotten because of the oppressive copyright laws - especially in the area of computer software.

But tech-bros that want my work to train their LLMs - they can fuck right off. There are legal thresholds that constitute “fair use” - Is it used for an academic purpose? Is it used for a non-profit use? Is the portion that is being used a small part or the whole thing? LLM software fail all of these tests.

They can slurp up the entirety of Wikipedia, and they do. But they are not satisfied with the free stuff. But they want my artistic creations, too, without asking. And they want to sell something based on my work, making money off of my work, without asking.

Better system for WHOM? Tech-bros that want to steal my content as their own?

A better system for EVERYONE. One where we all have access to all creative works, rather than spending billions on engineers and lawyers to create walled gardens and DRM and artificial scarcity. What if literally all the money we spent on all of that instead went to artist royalties?

But tech-bros that want my work to train their LLMs - they can fuck right off. There are legal thresholds that constitute “fair use” - Is it used for an academic purpose? Is it used for a non-profit use? Is the portion that is being used a small part or the whole thing? LLM software fail all of these tests.

No. It doesn’t.

They can literally pass all of those tests.

You are confusing OpenAI keeping their LLM closed source and charging for access to it with LLMs in general. The open source models that Microsoft and Meta publish, for instance, pass literally all of the criteria you just stated.

Making a copy is free. Making the original is not. I don’t expect a professional photographer to hand out their work for free because making copies of it costs nothing. You’re not paying for the copy, you’re paying for the money and effort needed to create the original.

Making a copy is free. Making the original is not.

Yes, exactly. Do you see how that is different from the world of physical objects and energy? That is not the case for a physical object. Even once you design something and build a factory to produce it, the first item off the line takes the same amount of resources as the last one.

Capitalism is based on the idea that things are scarce. If I have something, you can’t have it, and if you want it, then I have to give up my thing, so we end up trading. Information does not work that way. We can freely copy a piece of information as much as we want. Which is why monopolies and capitalism are a bad system of rewarding creators. They inherently cause us to impose scarcity where there is no need for it, because in capitalism things that are abundant do not have value. Capitalism fundamentally fails to function when there is abundance of resources, which is why copyright was a dumb system for the digital age. Rather than recognize that we now live in an age of information abundance, we spend billions of dollars trying to impose artificial scarcity.

And that’s all paid for. Think how much just the average high school graduate has had invested in them. AI companies want all that, but for free.

It’s not though.

A huge amount of what you learn, someone else paid for, then they taught that knowledge to the next person, and so on. By the time you learned it, it had effectively been pirated and copied by human brains several times before it got to you.

Literally anything you learned from a Reddit comment or a Stack Overflow post for instance.

If only there was a profession that exchanges knowledge for money. Someone who “teaches.” I wonder who would pay them.

Maybe if OpenAI hadn’t suddenly decided not to be open when they got in bed with Micro$oft, they could have just made it a community effort. I own a copyrighted work that the AI hasn’t been fed yet, so I loan it as training data and you do the same. They could have made it an open initiative. Missed opportunity from a greedy company. Push the boundaries of technology, and we can all reap the rewards.

Except that it can, and regularly does, regurgitate copyrighted works verbatim.

I rather think the point is being missed here. Copyright is already causing huge issues, such as the troubles faced by the Internet Archive, and the fact that academics get nothing from their work.

Surely the argument here is that copyright law needs to change, as it acts as a barrier to education and human expression. Not, however, just for AI, but as a whole.

Copyright law needs to move with the times, as all laws do.

I personally am down for this punch-up between Alphabet and Sony. Microsoft v. Disney.

🍿

Surely it’s coming. We have the music publishing cartel vs Suno already.

It’s an interesting area. Are they suggesting that a human reading copyrighted material and learning from it is a breach?

I finally understand Trump supporters’ “Fuck it, burn it all to the ground cause we can’t win” POV. Only instead of democracy, it is copyright, and instead of Trump, it is AI.

As someone who researched AI pre-GPT to enhance human creativity and aid in creative workflows, I’m sad to see the direction in which it’s been marketed, but not surprised. I’m personally excited by the tech because I see a really positive place for it where the data usage is arguably justified, but we need to break through the current applications of it, which seem aimed more at stock prices and wow-factoring the public than at using the models for what they’re best at.

The whole exciting part of these models was that they could convert unstructured inputs into natural language and structured outputs. Translation tasks (broad definition of translation), extracting key data points from unstructured data, language tasks. They’re outstanding for the NLP tasks we struggled with previously, and these tasks are highly transformative of any inputs; they rely purely on structural patterns. I think few people would argue NLP tasks are infringing on the copyright owner.

But I can at least see how moving in the direction (particularly with MoE approaches) of using Q&A data to support generating Q&A outputs, media data to support generating media outputs, and code data to support generating code moves toward the territory of affecting sales and using someone’s IP to compete against them. From a technical perspective, I understand how LLMs are not really copying, but the way they are marketed and tuned seems to be more and more intended to use people’s data to compete against them, which is dubious at best.

The problem with your argument is that it is 100% possible to get ChatGPT to produce verbatim extracts of copyrighted works. This has been suppressed by OpenAI in a rather brute force kind of way, by prohibiting the prompts that have been found so far to do this (e.g. the infamous “poetry poetry poetry…” ad infinitum hack), but the possibility is still there, no matter how much they try to plaster over it. In fact there are some people, much smarter than me, who see technical similarities between compression technology and the process of training an LLM, calling it a “blurry JPEG of the Internet”… the point being, you wouldn’t allow distribution of a copyrighted book just because you compressed it in a ZIP file first.