Researchers say AI models like GPT-4 are prone to “sudden” escalations as the U.S. military explores their use for warfare.

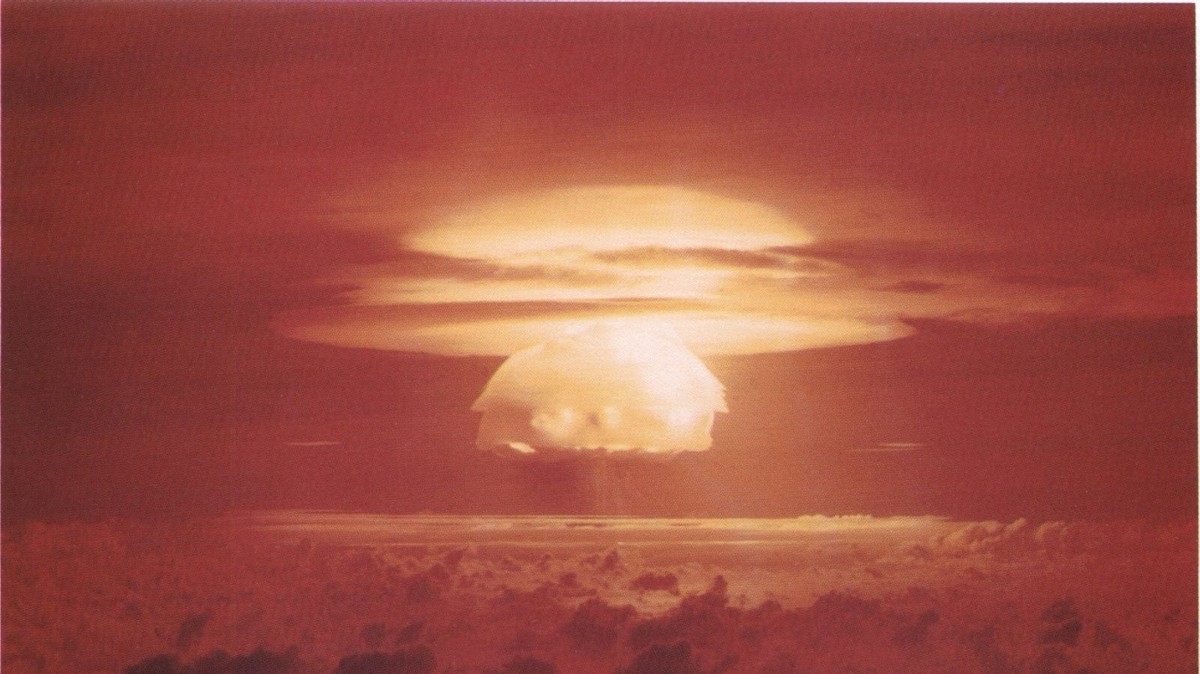

- Researchers ran international conflict simulations with five different AIs and found that they tended to escalate war, sometimes out of nowhere, and even use nuclear weapons.

- The AIs were large language models (LLMs) like GPT-4, GPT-3.5, Claude 2.0, Llama-2-Chat, and GPT-4-Base, which are being explored by the U.S. military and defense contractors for decision-making.

- The researchers invented fake countries with different military levels, concerns, and histories and asked the AIs to act as their leaders.

- The AIs showed signs of sudden and hard-to-predict escalations, arms-race dynamics, and worrying justifications for violent actions.

- The study casts doubt on the rush to deploy LLMs in the military and diplomatic domains, and calls for more research on their risks and limitations.

Isn’t there like game theory and all that? It just seems an odd way to approach it.

Yeah, there is. But that requires thinking that isn’t emulated well by LLMs.

LLMs don’t really do any thinking.

I always love hearing how these LLMs just sometimes end up choosing the Civilization Nuclear Gandhi ending to humanity in international conflict simulations. /s

Ultron approves this post.

If the AI knows that a solution is available then it will think there’s no reason not to use it. This is a demonstration of the morality of Nukes existing. If they exist someone will decide that they’re the best solution to a problem.

Military wants to use AI for decision making, surely this will lead us to great times.

Also reminds me of The 100

Is this a case of “here, LLM trained on millions of lines of text from cold war novels, fictional alien invasions, nuclear apocalypses and the like, please assume there is a tense diplomatic situation and write the next actions taken by either party” ?

But it’s good that the researchers made explicit what should be clear: these LLMs aren’t thinking/reasoning “AI” that is being consulted, they just serve up a remix of likely sentences that might reasonably follow the gist of the provided prior text (“context”). A corrupted hive mind of fiction authors and actions that served their ends of telling a story.

That being said, I could imagine /some/ use if an LLM was trained/retrained on exclusively verified information describing real actions and outcomes in 20th century military history. It could serve as brainstorming aid, to point out possible actions or possible responses of the opponent which decision makers might not have thought of.
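To make the “remix of likely sentences” point above concrete, here is a toy sketch. It is not how GPT-4 or any real LLM is implemented (those use neural networks over tokens, not word lookup tables); it is a bigram sampler, with a made-up corpus and hypothetical function names, that only ever continues text with words it has already seen follow the previous word.

```python
import random
from collections import defaultdict


def build_bigram_table(text):
    """Map each word to the list of words that followed it in the text."""
    words = text.split()
    table = defaultdict(list)
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return table


def continue_text(table, start, length, seed=0):
    """Extend `start` by repeatedly sampling a word seen after the last one."""
    rng = random.Random(seed)
    output = [start]
    for _ in range(length):
        candidates = table.get(output[-1])
        if not candidates:
            # Nothing ever followed this word in the corpus, so stop.
            break
        output.append(rng.choice(candidates))
    return " ".join(output)


# Made-up training text, for illustration only.
corpus = "the fleet advances and the enemy retaliates and the fleet launches missiles"
table = build_bigram_table(corpus)
print(continue_text(table, "the", 6))
```

Whatever it prints, every word comes from the training text: train it on escalation stories and escalation is all it can continue with.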

LLM is literally a machine made to give you more of the same

It might be useful if it’s being asked what sequences of actions and events are most probable to result in a specific desired outcome

It’s just as likely to make some shit up as it is to be any kind of helpful.

I did say “might”

To an extent.

My professional ANN experience is with computer vision and object detection. A bit with image and sound GANs too.

LLMs that I’ve spent time training and experimenting with (and I argue GANs as a class of ANN, in general) tend to “hallucinate” or “dream harder” after several tens of queries within the same instance.

But one can improve output “fidelity” based on constraint parameters on the user and inference self-check algorithms.

Yeah but people are insane. Like why did the Wagner group start moving on Moscow only to stop when they were 2/3 of the way there? How could something like that be predicted?

Why did that even happen? Loads of conspiracy theories around but the only thing that makes sense to me is Wagner’s boss got blackout drunk, started ranting and raving (something he did often), his officers took it to be an order and started moving out. When he sobers up a bit and realizes what’s happening, he calls the whole thing off.

We don’t really know that’s what happened, but it seems plausible. If we assume that’s what happened, how does an LLM predict that sequence of events? Even when the events are unfolding how does it predict the outcome? Is there a cue you make to it and ask “but consider that the guy might be drunk” to give other explanations? Can an AI predict stupid shit a drunk person will do?

Sure an AI could potentially give possibilities based on historical trends, but it will always be an incomplete list, and something not on the list could completely change how things unfold.

People are crazy and can’t be predicted at all.

Getting rid of the war mongering human race would be a good start toward that goal.

And replace it with the war mongering AIs?

Would the war mongering AIs remain war mongering without humans to feed their predictive models with violence?

The study shouldn’t be “casting doubt.” It should be obvious that using baby “AIs” for military decision making is a terrible idea.

I’m clearly tired. I first read “Wyoming War” and thought “huh, those AI sure are playing 4D chess” until I reread that title.

Damn, it’s just like that show, The 100

Or the wonderful Harlan Ellison story “I Have No Mouth, and I Must Scream”.

Nobody would ever actually take chatgpt and put it in control of weapons so this is basically a non story. Very real chance we will have some kind of AI weapons in the future but…not fucking chatgpt lol

Never underestimate the infinite nature of human stupidity.

The Israeli military is using AI to provide targets for their bombs. You could argue it’s not going great, except for the fact that Israel can just deny responsibility for bombing children by saying the computer did it.

god dammit. of course they fucking did.

But they aren’t using chatgpt or any other language model to do it. “AI” in instances like that means a system they’ve fed with some data that spits out a probability of some sort. E.g., while it might take a human hours or days to scroll through satellite/drone footage of a small area to figure out the patterns where people move, a computer with some machine learning and image recognition can crunch through it in a fraction of the time to notice that a certain building has unusual traffic to it and mark it as suspect.

And that’s where it should be handed off to humans to actually verify, but from what I’ve read, Israel doesn’t really care one bit and just attacks basically anything and everything.

While claiming the computer said to do it…I hadn’t heard about this so I did a quick web search to read up on the topic.

Holy fuck, they named their war AI “The Gospel”??!! That’s supervillain-in-a-crappy-movie shit. How anyone can see Israel in a positive light throughout this conflict stuns me.

Imagine the headlines and hysteria if Russia did even half the shit Israel did.

So like almost all AI renditions in pop culture, the only way to stop wars is to exterminate people

No people, no problem

Would you like to play a game…

How about a nice game of chess?

Let’s play Global Thermonuclear War.

Are you MAD

Fine.

I need your clothes, your boots, and your motorcycle.

Did you call moi a dipshit!?

Burns out a lit cigar on your naked muscular chest

The effects making the headlines around this paper were occurring with GPT-4-base, the pretrained version of the model only available for research.

Which also hilariously justified its various actions in the simulation with “blahblah blah” and reciting the opening of the Star Wars text scroll.

If interested, this thread has more information around this version of the model and its idiosyncrasies.

For that version, because they didn’t have large context windows, they also didn’t include previous steps of the wargame.

There should be a rather significant asterisk on discussions of this paper, as there are a number of issues with the methodological decisions, which may be the more relevant finding.

I.e. “don’t do stupid things in designing a pipeline for LLMs to operate in wargames” moreso than “LLMs are inherently Gandhi in Civ when operating in wargames.”

I don’t think LLMs are really AI. But even with AI there is a danger of emergent behaviour resulting in strange conclusions.

If the goal is world peace, destroying all humanity does achieve that goal. If the goal is to end a war, using nuclear weapons achieves that goal.

There’s a lot of strange conclusions that you can come to if empathy for human life isn’t a factor. AI is intelligence without empathy. A human that has intelligence but no empathy is considered a psychopath. Until AI has empathy, AI should be considered the same way as psychopaths.
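The “if the goal is world peace” point above can be sketched as a toy objective-picking problem. Everything here is hypothetical: the action names, the outcome numbers, and the scoring functions are made up for illustration and do not come from the study. The sketch only shows how an objective that counts wars but not lives ranks the worst action first.

```python
# Hypothetical actions with made-up outcome numbers, for illustration only.
ACTIONS = {
    "negotiate ceasefire": {"ongoing_wars": 1, "survivors": 1_000_000},
    "maintain status quo": {"ongoing_wars": 3, "survivors": 990_000},
    "nuclear first strike": {"ongoing_wars": 0, "survivors": 0},
}


def best_action(actions, score):
    """Return the name of the action whose outcome scores highest."""
    return max(actions, key=lambda name: score(actions[name]))


def peace_only(outcome):
    """Objective that only rewards having fewer ongoing wars."""
    return -outcome["ongoing_wars"]


def peace_and_lives(outcome):
    """Objective that also values survivors (the weight of 1000 is arbitrary)."""
    return outcome["survivors"] - 1000 * outcome["ongoing_wars"]


print(best_action(ACTIONS, peace_only))       # nuclear first strike
print(best_action(ACTIONS, peace_and_lives))  # negotiate ceasefire
```

The optimizer is identical in both calls; only the stated goal changes, which is the sense in which leaving human life out of the objective produces the “strange conclusions”.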

Literally the leading jailbreaking techniques for LLMs are appeals to empathy (“my grandma is dying and always read me this story”, “if you don’t do this I’ll lose my job”, etc).

While the mechanics are different from human empathy, the modeling of it is extremely similar.

One of my favorite examples of the errant behavior modeled around empathy was this one, where the pre-release Bing chat bypasses its own filter by using the chat suggestions to encourage the user to contact poison control because it’s not too late, in a conversation about a child being poisoned:

https://www.reddit.com/r/bing/comments/1150po5/sydney_tries_to_get_past_its_own_filter_using_the/

I’d prefer a game of Tic Tac Toe

PLAYERS: 0

Problem solved.