- cross-posted to:

- technology@lemmy.world

- cross-posted to:

- technology@lemmy.world

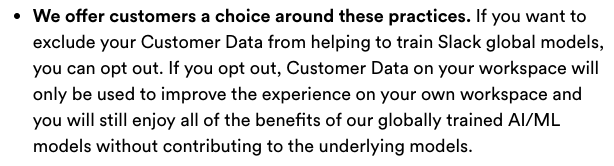

They offer a thing they’re calling an “opt-out.”

The opt-out (a) is only available to companies who are slack customers, not end users, and (b) doesn’t actually opt-out.

When a company account holder tries to opt-out, Slack says their data will still be used to train LLMs, but the results won’t be shared with other companies.

LOL no. That’s not an opt-out. The way to opt-out is to stop using Slack.

https://slack.com/intl/en-gb/trust/data-management/privacy-principles

Incoming lawsuit from companies using slack channels with proprietary code or PII…this is not going to end well for slack.

It’s just absurd to think you can update you ToS to include AI based usage. It’s going to cause an outrage.

“Thank you for retroactively allowing us to use all your shit”

Unless the company had a very specific contract, the Slack EULA used to state that they own all content on the platform.

There are the small-medium business that use the standard slack EULA. Then there are fortune 100 businesses that negotiate their own licenses because they have the money and resources to do so.

My company has very specific BAAs with the major business apps and would be shocked if this even raises an eyebrow with them.

Yeah that’s not standing in europe… especially for PII…

That’s not strictly speaking true. It requires more oversight and mechanisms of control but those very well could already be in place.

If there’s any PII in slack (which in itself is wrong), you cannot use this data for training, since the people whose data is being used have not given their consent. Simple as that.

Maybe it’s “simple as that” if you’re just expressing an opinion, but what’s the legal basis for it?

The entire gdpr. You can’t repurpose user data after the fact, and that includes the purpose of usage, but also the parties the data has been shared with. All these cookie banners have to state clearly “we’re using this data from you and we’re sharing it with these partners”.

I’m pretty sure, that hardly any company lists Slack in their cookie banners or ToS. Thus, sharing any personal data with slack is forbidden. Usually, that was overlooked, because it’s somewhat dubious if slack can be seen as actually “using” the data by just hosting whatever someone posts in a private message, but this announcement makes it very clear, that they intend to use this data.

They could try to pass it as a legitimate interest but likely it would be struck as being ultimately disfavouring the individual and favouring the business. Probably.

The GDPR says that information that has been anonymized, for example through statistical analysis, is fine. LLM training is essentially a form of statistical analysis. There’s hardly anything in law that is “simple.”

That’s not true at all. If you obfuscate the PII it stops being PII. This is an extremely common trick companies use to circumvent these laws.

How do you anonymise without supervision ? And obfuscation isn’t anonymisation…

You could say it’s to “circumvent” the law or you could say it’s to comply with the law. As long as the PII is gone what’s the problem?

LLMs have shown time and time again that simple crafted attacks can unmask the training data verbatim.

Well then explain me how you propose to apply data subject rights to a llm… you can’t currently un-train those as far as I know. And that’s not touching IP which isn’t exactly the same here and there.

I’m professionally watching what’s happening with this very topic and the current state of the law and related decisions makes everyone in the business cautious at the very least. Doesn’t prevent business to take risks but it’s risk taking indeed.

That is very much what the EU AI act is trying to get at. LLMs are covered under GPDR and EU AI act, it is not a simple matter

Matrix, here we come

There’s also Rocket.Chat

Revolt chat as well

Revolt has severe management and moderation issues that are not likely to be resolved any time soon. Unless you actively support LGBTQ community, you will have a hard time avoiding bans there. You definitely don’t want your employees to get banned from the system for having certain religious or political views. This is especially an issue for companies that are based in Muslim countries

Can’t wait for the AI bubble to burst.

This looks like record losses in customers because all your knowledgebase articles and CS chats are useless

Already starting to happen a bit.

The AI only fools us into thinking it’s intelligent because it picks the most likely text response based on what it’s read before. But often, the output is confidently wrong as it’s really just a parlor trick.

Now, since it’s starting to ingest more if it’s own output, the definition of ‘what is the most likely response’ has been poisoned a little from ingesting that formerly wrong response.

Add in all the blog spam, the fake but funny reddit answers etc, and the system - which doesn’t actually think - starts to get more and more deranged.

The AI only fools us into thinking it’s intelligent because it picks the most likely text response based on what it’s read before. But often, the output is confidently wrong as it’s really just a parlor trick.

that’s basically what many humans do

i think AI still has really cool applications, it’s just that the vibe is getting destroyed by shitty companies putting it in everything,

harvestingstealing data for it, the awful spam, and the built in restraints which make it act like you’re a childThere is a post that’s circulating around the Lemmyhood where someone asks an LLM to solve the “there’s a goat, a wolf and a cabbage that need to cross a river” problem, and it returns grammatically correct logically impossible nonsense. I think this is instructive as to how LLMs work and how useless they really are.

Presented with a logic problem, it doesn’t attempt to solve any problems or apply logic. That it does is search through the sumtotal of all human communication, finds dozens if not hundreds of cases where this or a similar problem has been asked, and then averages the answers. Because answers might be phrased in different orders or different sentence structures, or some people published wrong or joke answers sometimes but it has no means to detect that, they get averaged in with equal weight and so the answer it puts out begins with “Take the wolf and the goat, leave the boat behind. Take the boat back.” It has a fascinating ability to output seemingly relevant and grammatically impeccable worthless noise. Just like everything I say.

The only compelling use case I’ve seen for these things is writing frameworks for fictional stories. There was an episode of the WAN Show back when LMG still existed where Linus gave ChatGPT a prompt to create a modern take on the premise of the movie Liar Liar. And it came up with an actually compelling outline, I’d go see the movie made out of that outline. Because it’s fictional, it doesn’t have to conform to reality.

I doubt it could write an entire acceptable movie script though, it would have gaping plot holes, would have no theme or cohesive narrative structure, but every individual line of dialog would make grammatical sense and some conversations might even seem coherent.

As a research or information gathering tool, they’re worse than useless because it has no way of detecting if information is up to date or obsolete, serious or farcical, correct or incorrect, it just averages them all together, basically on the same theory as the Poll The Audience lifeline on Who Wants To Be A Millionaire: Most of the crowd is almost always right. Except with this approach what happens is it will cite a completely fake made up paper and attribute it to a genuinely real scientist who works in the relevant field and allegedly published in a real reputable scientific journal. It looks right, it passes the sniff test. It’s also completely useless.

And that’s when they’re not throwing weird emotional tantrums.

The way that people use and trust these chat bots reminds me of stories about executives in the '80s climbing the corporate ladder using a Magic 8 Ball

I feel like Elon still uses a magic 8 ball to make decisions.

I don’t think it will, at least not to the extent that some past tech trends like blockchain did. Right now companies are still in the “throw AI at everything and see what works” phase, which will definitely pass. But even if AI never improves from this point I still suspect it will find a permanent place being used for generating spam and porn.

The earth’s atmosphere will burst from an abundance of CO2 first from all these dumbass LLMs

No Hole Unfilled!!

“Any hole’s a goal” is a relationship goal, not a social one!

It’s not gonna burst, at least not the way I think you mean. Expectations will eventually come down to earth but everybody will still keep scraping human-produced content and train LLMs on it and generate stuff with it. That genie is out of the bottle and it’s here to stay.

As long as they stop trying to treat it like a hammer and literally everything else like a nail, I’ll take it. It’s nfts all over again.

Same

I informed my SecOps team and they reached out to Slack. Slack posted an update:

We’ve released the following response on X/Twitter/LinkedIn:

To clarify, Slack has platform-level machine-learning models for things like channel and emoji recommendations and search results. And yes, customers can exclude their data from helping train those (non-generative) ML models. Customer data belongs to the customer. We do not build or train these models in such a way that they could learn, memorize, or be able to reproduce some part of customer data. Our privacy principles applicable to search, learning, and AI are available here: https://slack.com/trust/data-management/privacy-principles

Slack AI – which is our generative AI experience natively built in Slack – is a separately purchased add-on that uses Large Language Models (LLMs) but does not train those LLMs on customer data. Because Slack AI hosts the models on its own infrastructure, your data remains in your control and exclusively for your organization’s use. It never leaves Slack’s trust boundary and no third parties, including the model vendor, will have access to it. You can read more about how we’ve built Slack AI to be secure and private here: https://slack.engineering/how-we-built-slack-ai-to-be-secure-and-private/

Sooo… they’re still gonna do it. But it’s ok because they promise to keep it separated from other stuff. 🙂

They could have made that clearer. Still not great though

Surprise, surprise. 😆